A web crawler is known as a robot or spider. It is widely used by search engines to discover new or updated content on the web.

A web crawler starts by collecting a few web pages and then follows links on those pages to collect new content.

- Given a set of URLs, download all the web pages addressed by the URLs

- Extract URLs from these web pages

- Add new URLs to the list of URLs to be downloaded. Repeat these 3 steps.

Uses:

- Search Engine Indexing

- Web Archiving

- Web Mining

- Web Monitoring

Clarifying Questions

- What is the main purpose of the crawler?

- How many web pages does the web crawler collect per month?

- What content types are included?

- Should we consider newly added or edited web pages?

- Do we need to store HTML pages crawled from the web?

- How do we handle web pages with duplicate content?

Answers:

- Search Engine Indexing

- 1 billion pages

- HTML only

- Yes, we should consider newly added or edited web pages

- Yes, up to 5 years

- Pages with duplicate content should be ignored

Non-Functional Requirements

- Scalability: The web is very large. There are billion of pages. Web crawling should be extremely efficient using parallelization.

- Robustness: Crawler should handle bad HTML, unresponsive servers, crashes, malicious links, etc.

- Politeness: The crawler should not make too many requests to a website within a short time interval.

- Extensibility: The system is flexible so that minimal changes are needed to support new content types. If we want to crawl image files in the future, we should not need to redesign the entire system.

Back of the envelope estimation

Assume 1 billion web pages are downloaded every month.

Assume the average web page size is 500k.

Assuming data are stored for five years

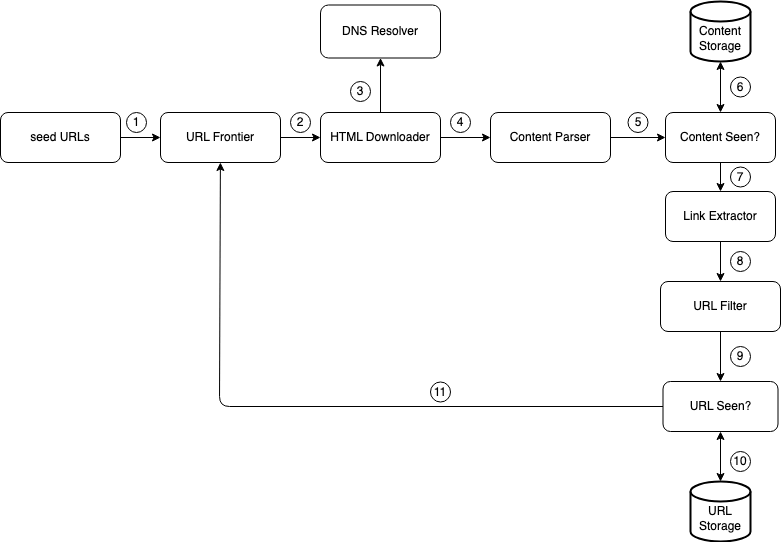

High-Level Design

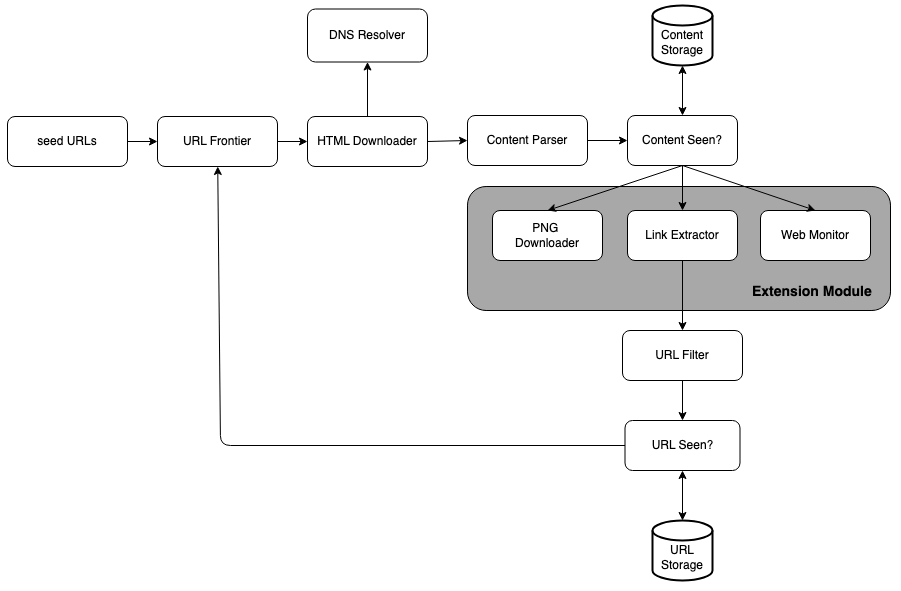

Seed URLs

A web crawler uses seed URLs as a starting point for the crawl process.

A good seed URL serves as a good starting point that a crawler can utilize to traverse as many links as possible.

A general strategy is to divide the entire URL space into smaller ones. For example, countries.

Another way is to choose seed URLs based on topics: shopping, sports, healthcare, etc.

URL Frontier

Most modern web crawlers split the crawl state into two:

- to be downloaded

- already downloaded.

The component that stores URLs to be downloaded is called the URL Frontier. You can refer to this as a First-in-First-out (FIFO) queue.

HTML Downloader

The HTML downloader downloads web pages from the internet. Those URLs are provided by the URL Frontier.

DNS Resolver

To download a web page, a URL must be translated into an IP address. The HTML Downloader calls the DNS Resolver to get the corresponding IP address for the URL.

Content Parser

After a web page is downloaded, it must be parsed and validated because malformed web pages could provoke problems and waste storage space. Implementing a content parser in a crawl server will slow down the crawling process. Thus, the content parser is a separate component.

Content Seen?

29% of the web pages are duplicated contents.

We introduce the “Content Seen?” data structure to eliminate data redundancy and shorten processing time. It helps to detect new content previously stored in the system. To detect duplicates, we can compare the hash values of the two web pages

Content Storage

It is a storage system for storing HTML content.

- Most of the content is stored on disk because the data set is too big to fit in memory.

- Popular content is kept in memory to reduce latency.

URL Extractor

URL Extractor parses and extracts links from HTML pages.

URL Filter

The URL filter excludes certain content types, file extensions, error links and URLs in “blacklisted” sites.

URL Seen?

“URL Seen?” is a data structure that keeps track of URLs that are visited before or already in the Frontier.

“URL Seen?” helps to avoid adding the same URL multiple times as this can increase server load and cause potential infinite loops.

Common techniques to implement the “URL Seen?” component are:

- Bloom filter

- Hash table

URL Storage

URL Storage stores already visited URLs.

- Add seed URLs to the URL Frontier

- HTML Downloader fetches a list of URLs from URL Frontier.

- HTML Downloader gets IP addresses of URLs from DNS resolver and starts downloading.

- Content Parser parses HTML pages and checks if pages are malformed.

- After content is parsed and validated, it is passed to the “Content Seen?” component.

- “Content Seen” component checks if a HTML page is already in the storage.

- If it is in the storage, this means the same content in a different URL has already been processed. In this case, the HTML page is discarded.

- If it is not in the storage, the system has not processed the same content before. The content is passed to Link Extractor.

- Link extractor extracts links from HTML pages.

- Extracted links are passed to the URL filter.

- After links are filtered, they are passed to the “URL Seen?” component.

- “URL Seen” component checks if a URL is already in the storage, if yes, it is processed before, and nothing needs to be done.

- If a URL has not been processed before, it is added to the URL Frontier.

Design Deep Dive

Depth-first search (DFS) vs Breadth-first search (BFS)

You can think of the web as a directed graph where web pages serve as nodes and hyperlinks (URLs) as edges. The crawl process can be seen as traversing a directed graph from one web page to others.

DFS is not a good choice because the depth of DFS can be very deep

BFS is commonly used by web crawlers and is implemented by a first-in-first-out (FIFO) queue (URLs are dequeued in the order they are enqueued).

BFS has two problems:

- Multiple requests in parallel to the same server

- Most links from the same web page are linked back to the same host. When the crawler tries to download web pages in parallel, Wikipedia servers will be flooded with requests. This is considered as “impolite”.

- No priority

- every page is considered the same. If we want to prioritize based on page ranks, web traffic, update frequency, it is not possible

URL Frontier

URL frontier helps to address these problems. A URL frontier is a data structure that stores URLs to be downloaded.

The URL frontier is an important component to ensure:

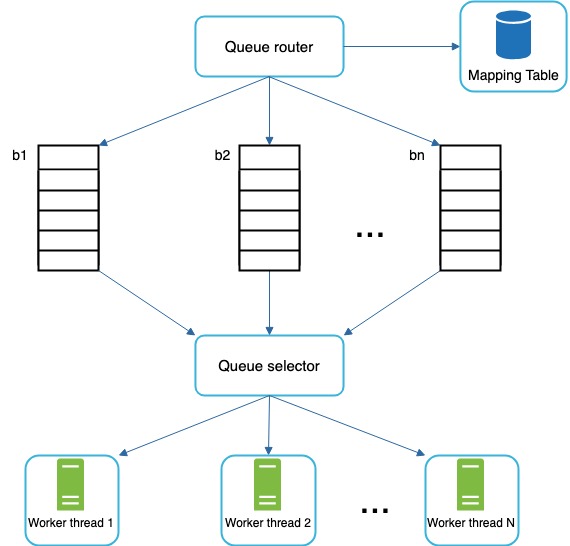

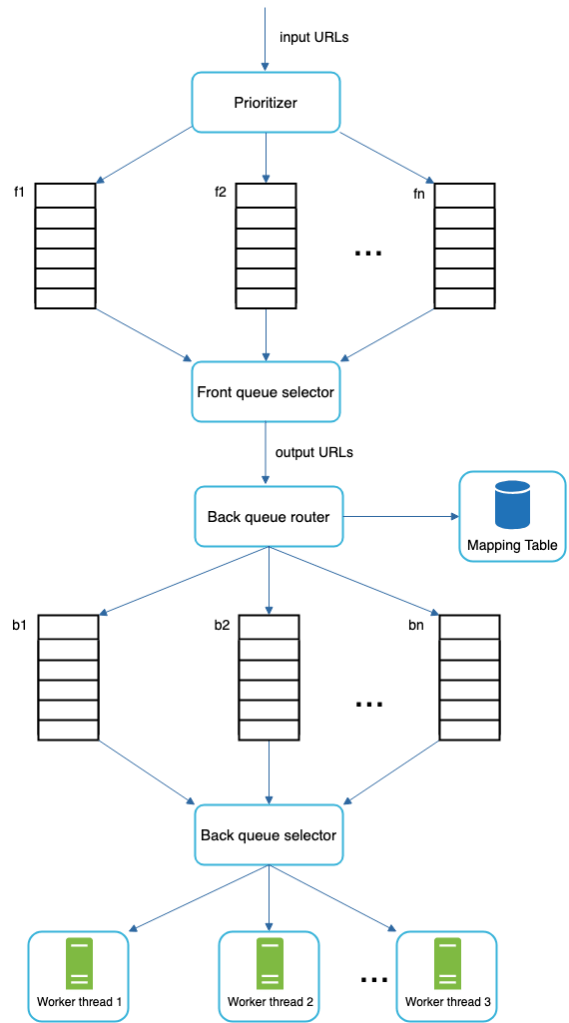

Politeness

- A web crawler should avoid sending too many requests to the same hosting server within a short period.

- The general idea of enforcing politeness is to download one page at a time from the same host.

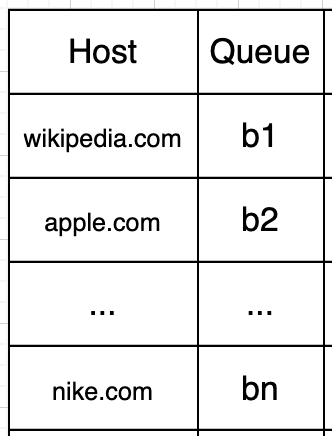

- Queue router: It ensures that each queue (b1, b2, … bn) only contains URLs from the same host.

- Mapping table: It maps each host to a queue.

- FIFO queues b1, b2 to bn: Each queue contains URLs from the same host.

- Queue selector: Each worker thread is mapped to a FIFO queue, and it only downloads URLs from that queue. The queue selection logic is done by the Queue selector.

- Worker thread 1 to N. A worker thread downloads web pages one by one from the same host. A delay can be added between two download tasks.

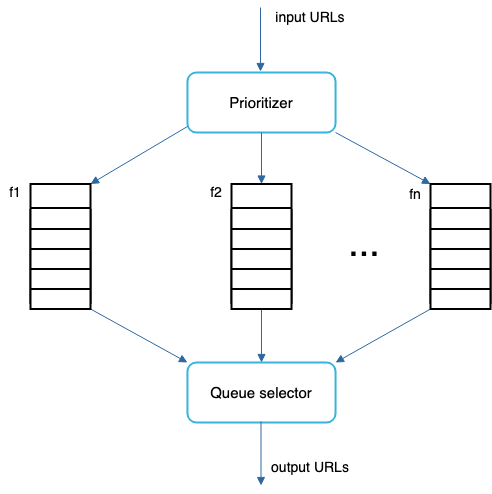

URL prioritization

- Prioritizer is the component that handles URL prioritization.

- We prioritize URLs based on usefulness, which can be measured by PageRank, website traffic, update frequency, etc.

- Prioritizer: It takes URLs as input and computes the priorities.

- Queue f1 to fn: Each queue has an assigned priority. Queues with high priority are selected with higher probability.

- Queue selector: Randomly choose a queue with a bias towards queues with higher priority.

URL prioritization + Queue Selector

- Front queues: manage prioritization

- Back queues: manage politeness

Freshness

- Web pages are constantly being added, deleted, and edited. A web crawler must periodically recrawl downloaded pages to keep our data set fresh.

- Strategies to optimize freshness are:

- Recrawl based on web pages’ update history.

- Prioritize URLs and recrawl important pages first and more frequently.

Storage for URL Frontier

In real-world crawl for search engines, the number of URLs in the frontier could be hundreds of millions.

- Putting everything in memory is neither durable nor scalable.

- Keeping everything in the disk is undesirable neither because the disk is slow; and it can easily become a bottleneck for the crawl.

We adopted a hybrid approach. The majority of URLs are stored on disk, so the storage space is not a problem. To reduce the cost of reading from the disk and writing to the disk, we maintain buffers in memory for enqueue/dequeue operations. Data in the buffer is periodically written to the disk.

HTML Downloader

The HTML Downloader downloads web pages from the internet using the HTTP protocol.

Robots Exclusion Protocol is a standard used by websites to communicate with crawlers.

- It specifies what pages crawlers are allowed to download.

- Before attempting to crawl a web site, a crawler should check its corresponding robots.txt first and follow its rules.

- To avoid repeat downloads of robots.txt file, we cache the results of the file.

Performance optimization

Distributed crawl

To achieve high performance, crawl jobs are distributed into multiple servers, and each server runs multiple threads. The URL space is partitioned into smaller pieces; so, each downloader is responsible for a subset of the URLs.

Cache DNS Resolver

DNS Resolver is a bottleneck for crawlers because DNS requests might take time due to the synchronous nature of many DNS interfaces.

Maintaining our DNS cache to avoid calling DNS frequently is an effective technique for speed optimization.

Our DNS cache keeps the domain name to IP address mapping and is updated periodically by cron jobs.

Locality

Distribute crawl servers geographically. When crawl servers are closer to website hosts, crawlers experience faster download time.

Short timeout

Some web servers respond slowly or may not respond at all. To avoid long wait time, a maximal wait time is specified. If a host does not respond within a predefined time, the crawler will stop the job and crawl some other pages.

Robustness

- Consistent hashing: This helps to distribute loads among downloaders. A new downloader server can be added or removed using consistent hashing.

- Save crawl states and data: To guard against failures, crawl states and data are written to a storage system. A disrupted crawl can be restarted easily by loading saved states and data.

- Exception handling: Errors are inevitable and common in a large-scale system. The crawler must handle exceptions gracefully without crashing the system.

- Data validation: This is an important measure to prevent system errors.

Extensibility

Goal: Make the system flexible enough to support new content types

Solution: The crawler can be extended by plugging in new module

Detect and avoid problematic content

- Problem: Redundant content

- Solution: Hashes or checksums help to detect duplication

- Problem: Spider traps

- Solution: Manually verify and identify a spider trap, and either exclude those websites from the crawler or apply some customized URL filters.

- Problem: Data noise

- Solution: Some of the contents have little or no value, such as advertisements, code snippets, spam URLs, etc. Those contents are not useful for crawlers and should be excluded if possible.